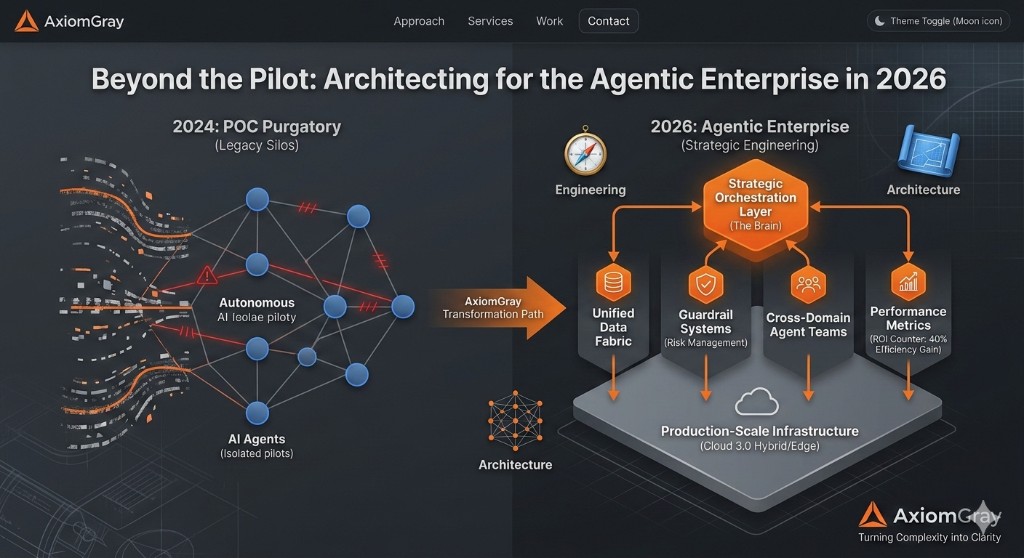

The promise of agentic AI is well established. The delivery record is not. Walk into most enterprise technology organizations today and you will find a graveyard of impressive demonstrations: agents that summarized documents flawlessly in a sandboxed environment, chatbots that answered questions brilliantly until they met production data, workflows that automated a single process while leaving nine others untouched. This is POC Purgatory, the limbo of proofs of concept that never reach production, and it is where the majority of AI investment dies quietly.

The failure mode is almost never the model. It is the architecture surrounding it.

Why "Automating Broken Processes" Is the Number One Reason Projects Fail

There is a seductive logic to AI automation: identify a repetitive process, drop an agent in front of it, and reclaim the hours. The problem is that most enterprise processes were not designed to be automated. They were designed to be tolerated.

Decades of accumulated workarounds, undocumented exceptions, and tribal knowledge are baked into how your teams actually work. When an AI agent encounters these processes at scale, it does not simplify them—it amplifies their dysfunction. The automation succeeds technically and fails operationally. You have now built a very efficient machine for doing the wrong thing.

The diagnostic question is not "can an agent perform this task?" It is "is this task worth automating in its current form?" Before any orchestration layer is designed, the underlying process must be audited, rationalized, and documented. This is not a technology problem. It is a systems engineering problem.

From Human Execution to Strategic Orchestration

The shift most organizations must make is from task automation to strategic orchestration. These are meaningfully different.

Task automation replaces a human doing a specific action: classifying an email, generating a summary, routing a ticket. It operates at the level of the step.

Strategic orchestration operates at the level of the system. An orchestration layer does not simply execute tasks—it governs which agents are invoked, in what sequence, with what context, and subject to what constraints. It holds the decision logic that determines when a process should escalate to human review, when outputs require validation, and when the cost of an incorrect autonomous action exceeds the cost of intervention.
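To make the escalation logic concrete, here is a minimal sketch of how an orchestration layer might decide, step by step, whether an agent acts on its own or hands off to a person. Everything in it is an illustrative assumption rather than a reference design: the names `Step`, `Route`, `route`, and `plan` are hypothetical, the agent names and cost figures are invented, and weighting the error cost by an estimated error probability is one simple way to compare "cost of an incorrect action" with "cost of intervention".

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    EXECUTE = "execute"    # the agent acts autonomously
    ESCALATE = "escalate"  # the step is handed to human review


@dataclass(frozen=True)
class Step:
    agent: str                # hypothetical agent name
    error_probability: float  # estimated chance the agent's action is wrong
    error_cost: float         # cost of an incorrect autonomous action
    review_cost: float        # cost of a human intervention


def route(step: Step) -> Route:
    """Escalate when the expected cost of an error exceeds the cost of review."""
    expected_error_cost = step.error_probability * step.error_cost
    if expected_error_cost > step.review_cost:
        return Route.ESCALATE
    return Route.EXECUTE


def plan(steps: list[Step]) -> list[tuple[str, Route]]:
    """Return the agents in invocation order with a routing decision for each."""
    return [(s.agent, route(s)) for s in steps]


# Illustrative workflow: the numbers are made up for the example.
workflow = [
    Step("classify_email", error_probability=0.02, error_cost=5.0, review_cost=2.0),
    Step("issue_refund", error_probability=0.05, error_cost=500.0, review_cost=2.0),
]
for agent, decision in plan(workflow):
    print(agent, decision.value)
```

The point of the sketch is that the decision lives in the orchestration layer, not in either agent: the same refund agent could run unattended or under review depending on the costs the organization assigns.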

The orchestration layer is the enterprise brain. The agents are its hands.

Organizations that build durable AI capability in 2026 will not be the ones that deployed the most agents. They will be the ones that invested in the connective tissue: the event buses, the state management systems, the context stores, and the governance frameworks that make agent behavior predictable at scale.

Building Guardrail Architectures for Autonomous Agent Risk

Autonomous agents introduce a category of risk that has no precise analogue in traditional software: emergent failure at decision boundaries. A conventional application either works or it does not. An autonomous agent can work correctly in, say, 95% of cases and fail catastrophically in the remaining 5%, where context is ambiguous, data is sparse, or the task sits at the edge of its training distribution.

A Guardrail Architecture addresses this directly. It is not a safety net added after the fact; it is a set of structural constraints built into the system design:

Confidence Thresholds. Agents should be designed to declare uncertainty, not just produce outputs. Any action below a defined confidence threshold is automatically queued for human review rather than executed autonomously.

Audit-First Design. Every agent action must produce an immutable trace: what input was received, what decision was made, what action was taken, and why. This is not optional for regulated industries. It is a fundamental requirement for any system claiming to be enterprise-grade.

Bounded Autonomy Zones. Define explicitly the universe of actions an agent is permitted to take, the systems it is permitted to access, and the data it is permitted to write. Agents should operate within the smallest permission footprint consistent with their function. Scope creep in agent permissions is one of the highest-risk failure modes we see in the field.

Rollback-Safe Execution. Where possible, agent actions should be designed as reversible transactions. Write operations should be staged for confirmation before commitment. Irreversible actions should require elevated approval thresholds.
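The four constraints above can be combined into a single gate that every proposed agent action passes through. The sketch below is one possible shape for that gate, not a prescribed implementation: `Proposal`, `Guardrail`, the outcome labels, the 0.9 threshold, and the example actions and systems are all hypothetical, and the in-memory list stands in for what would need to be append-only, tamper-evident storage in a real deployment.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Proposal:
    action: str        # what the agent wants to do
    target: str        # the system it wants to touch
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0
    reversible: bool   # whether the action can be rolled back
    reason: str        # agent's stated rationale, kept for the audit trace


@dataclass
class Guardrail:
    allowed: frozenset[tuple[str, str]]  # permitted (action, target) pairs
    threshold: float = 0.9               # assumed confidence threshold
    audit_log: list[dict] = field(default_factory=list)  # stand-in for immutable storage

    def decide(self, p: Proposal) -> str:
        if (p.action, p.target) not in self.allowed:
            outcome = "deny"               # outside the bounded autonomy zone
        elif p.confidence < self.threshold:
            outcome = "human_review"       # below the confidence threshold
        elif not p.reversible:
            outcome = "elevated_approval"  # irreversible actions need sign-off
        else:
            outcome = "stage"              # staged for confirmation before commit
        # Audit-first: record the input, the decision, and the rationale every time.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "input": p,
            "decision": outcome,
            "why": p.reason,
        })
        return outcome


guard = Guardrail(allowed=frozenset({("update_ticket", "helpdesk"),
                                     ("delete_record", "crm")}))
print(guard.decide(Proposal("update_ticket", "helpdesk", 0.97, True, "duplicate ticket")))   # stage
print(guard.decide(Proposal("update_ticket", "helpdesk", 0.60, True, "unclear intent")))     # human_review
print(guard.decide(Proposal("delete_record", "crm", 0.99, False, "retention policy")))       # elevated_approval
print(guard.decide(Proposal("send_payment", "erp", 0.99, True, "invoice matched")))          # deny
```

Checking permissions before confidence is a deliberate choice in this sketch: an action outside the agent's autonomy zone is refused no matter how confident the agent is, and the refusal is still logged.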

The Architectural Imperative

Enterprise AI in 2026 will be defined less by model capability and more by architectural maturity. The organizations that invest now in coherent orchestration design, principled process rationalization, and robust guardrail frameworks will build a structural advantage that compounds over time.

The question for technology leadership is not whether to build agentic systems. The question is whether to build them on foundations that will hold.